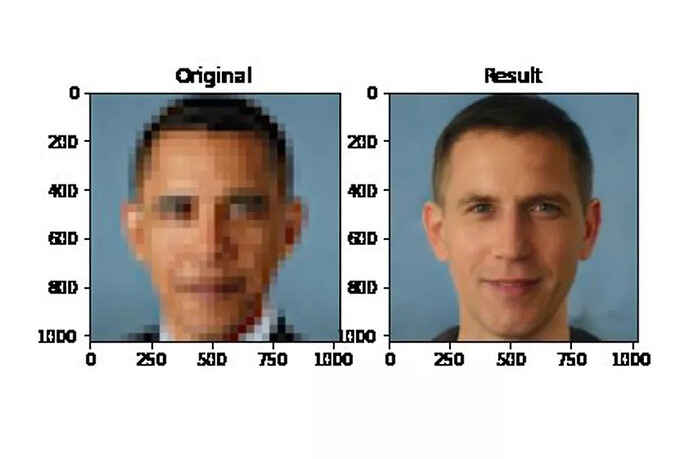

It’s a startling image that illustrates the deep-rooted biases of AI research. Input a low-resolution picture of Barack Obama, the first black president of the United States, into an algorithm designed to generate depixelated faces, and the output is a white man.

It’s not just Obama, either. Get the same algorithm to generate high-resolution images of actress Lucy Liu or congresswoman Alexandria Ocasio-Cortez from low-resolution inputs, and the resulting faces look distinctly white. As one popular tweet quoting the Obama example put it: “This image speaks volumes about the dangers of bias in AI.”

But what’s causing these outputs and what do they really tell us about AI bias?

First, we need to know a little bit about the technology being used here. The program generating these images is an algorithm called PULSE, which uses a technique known as upscaling to process visual data. Upscaling is like the “zoom and enhance” trope you see in TV and film, but, unlike in Hollywood, real software can’t just generate new data from nothing. In order to turn a low-resolution image into a high-resolution one, the software has to fill in the blanks using machine learning.

In the case of PULSE, the algorithm doing this work is StyleGAN, which was created by researchers from NVIDIA. Although you might not have heard of StyleGAN before, you’re probably familiar with its work. It’s the algorithm responsible for making those eerily realistic human faces that you can see on websites like ThisPersonDoesNotExist.com, faces so realistic that they’re often used to generate fake social media profiles.

What PULSE does is use StyleGAN to “imagine” the high-res version of pixelated inputs. It does this not by “enhancing” the original low-res image, but by generating a completely new high-res face that, when pixelated, looks the same as the one inputted by the user.
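To make that idea concrete, here is a minimal toy sketch in Python. It is not PULSE and it does not use StyleGAN: `toy_generator`, `downscale`, `loss`, and `search` are hypothetical names invented for illustration, the "image" is just a list of eight numbers, and the random hill-climbing search is a simplification chosen for brevity rather than the optimizer PULSE uses. What it shows is only the shape of the approach: generate a candidate, pixelate it, compare it with the input, and keep adjusting until the two match.

```python
import random

def downscale(image, factor):
    """Average-pool a 1D 'image' (a list of floats) by `factor`."""
    return [sum(image[i:i + factor]) / factor
            for i in range(0, len(image), factor)]

def toy_generator(latent):
    """Hypothetical stand-in for a face generator (NOT StyleGAN):
    maps a 3-number latent vector to an 8-pixel 'high-res' signal."""
    a, b, c = latent
    return [a + b * (i / 7) + c * ((i % 2) - 0.5) for i in range(8)]

def loss(latent, low_res, factor):
    """Squared error between the pixelated candidate and the input."""
    candidate = downscale(toy_generator(latent), factor)
    return sum((x - y) ** 2 for x, y in zip(candidate, low_res))

def search(low_res, factor, steps=5000, seed=0):
    """Random hill-climbing over the latent vector. This is an
    assumption made for simplicity, not PULSE's actual optimizer."""
    rng = random.Random(seed)
    best = [rng.uniform(-1, 1) for _ in range(3)]
    best_loss = loss(best, low_res, factor)
    for _ in range(steps):
        # Nudge the current best latent and keep the nudge if it helps.
        trial = [v + rng.gauss(0, 0.05) for v in best]
        trial_loss = loss(trial, low_res, factor)
        if trial_loss < best_loss:
            best, best_loss = trial, trial_loss
    return best, best_loss

low_res_input = [0.2, 0.4, 0.6, 0.8]   # the "pixelated" input
latent, final_loss = search(low_res_input, factor=2)
high_res = toy_generator(latent)       # a newly generated 8-pixel signal
```

Notice that the search never touches the input pixels directly. It only ever asks whether a freshly generated candidate, once pixelated, resembles them. In this toy the third latent value has no effect on the pixelated result at all, so the search is free to settle on any value for it.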

This means each pixelated image can be upscaled in a variety of ways, the same way a single set of ingredients makes different dishes. It’s also why you can use PULSE to see what Doom guy, or the hero of Wolfenstein 3D, or even the crying emoji look like at high resolution. It’s not that the algorithm is “finding” new detail in the image as in the “zoom and enhance” trope; it’s instead inventing new faces that revert to the input data.
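A small worked example shows why more than one answer is possible. In the hedged sketch below, pixelation is modeled as averaging each 2x2 block of a tiny grid of numbers (an assumption for illustration; `downscale_2x` is a hypothetical helper, not part of PULSE). Two visibly different "high-res" grids collapse to exactly the same "low-res" grid, so nothing in the low-res version can say which one it came from.

```python
def downscale_2x(grid):
    """Average each non-overlapping 2x2 block of a square grid."""
    n = len(grid)
    return [[(grid[r][c] + grid[r][c + 1]
              + grid[r + 1][c] + grid[r + 1][c + 1]) / 4
             for c in range(0, n, 2)]
            for r in range(0, n, 2)]

# Two different 4x4 "high-res" grids (made-up values).
high_res_a = [
    [0, 4, 8, 8],
    [4, 0, 8, 8],
    [2, 2, 1, 3],
    [2, 2, 3, 1],
]
high_res_b = [
    [2, 2, 6, 10],
    [2, 2, 10, 6],
    [0, 4, 2, 2],
    [4, 0, 2, 2],
]

low_a = downscale_2x(high_res_a)
low_b = downscale_2x(high_res_b)
same_when_pixelated = (low_a == low_b)
```

Which of the many matching candidates an upscaler returns depends on what its generator tends to produce, not on anything contained in the pixelated input.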

News Source: What a machine learning tool that turns Obama white can (and can’t) tell us about AI bias